Automation does not imply joblessness

The ‘ironies of automation’ applied to large language models

Are you worried about your job being taken over by AI? A slew of recent studies suggest you should be. Among the headlines:

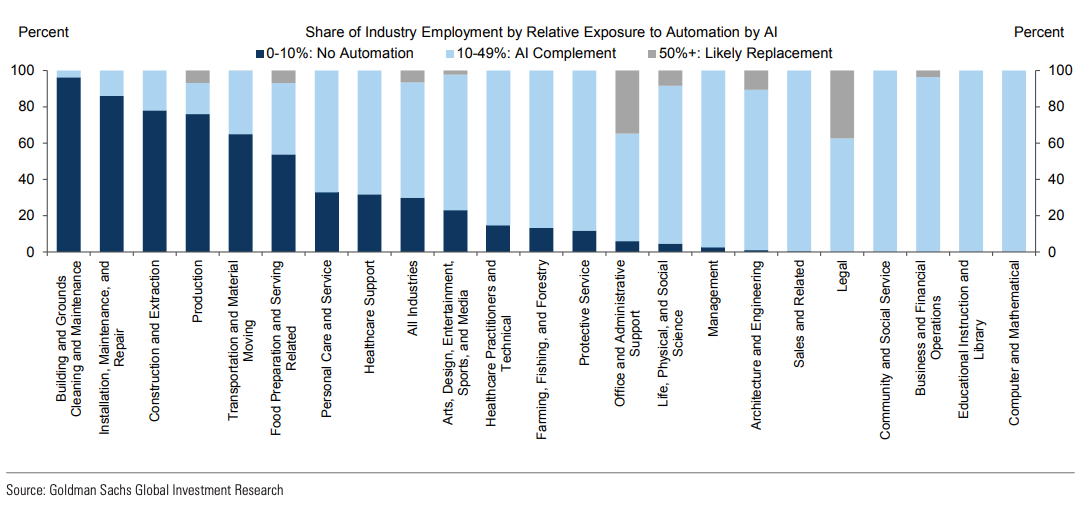

Goldman Sachs have speculated that if generative AI continues its march, it will ‘expose’ 300 million jobs to automation. The jobs most at risk are those where more than half of tasks can be automated; those where automation affects 10-49% of tasks are likely to see AI play a more complementary role.

Another study, co-authored by OpenAI, predicts that 80% of the workforce will have at least 10% of their work affected, and 19% will have at least 50% affected. The authors cite the versatility of large language models, characterising GPT as a ‘general purpose technology’ that will affect white-collar workers of every hue.

Stanford’s most recent index report notes that 50% of companies had already adopted AI in at least one business function by the end of 2022 - a number that has surely risen as the AI arms race has taken flight this year.

The appetite for automation among managers is strong, with one survey suggesting that as many as two-thirds would gladly replace employees with AI alternatives if the outputs are comparable.

Automation is inevitable, joblessness is not

The vocabulary underpinning much of the automation research is important - ‘exposure’ simply means that large language models will interact with your role, displacing and reshaping individual tasks rather than taking over completely. The security of your job will ultimately depend on how the counteracting forces of substitution and complementarity play out.

The bar for outright substitution is exceedingly high; it presumes a level of competence that large language models are not poised to achieve. I write those words with some caution, because LLMs continue to surprise us with their newfound capabilities. Yet they remain shrouded in mystery; even as we laud their ‘emergent’ behaviours, such as their responsiveness to chain-of-thought prompting, we have no satisfactory explanation of how such behaviours come about, or whether these systems will extend their performance to new situations (Samuel Bowman’s ‘Eight Things to Know About Large Language Models’ offers a brilliant elaboration of these points).

Large language models appear very intelligent in the aggregate, but are liable to inexplicable mistakes on any given task. They are both impressively smart and inexplicably dumb, meaning they need careful supervision, and reining in when they threaten to overreach. In healthcare, for instance, ChatGPT shows remarkable diagnostic capabilities, but struggles with basic maths and note-taking. Is that the kind of doctor you would entrust your care to?

The opaqueness of these systems, and the inherent unpredictability they possess at large scale, is precisely the reason humans need to be kept in the loop, even at the helm. Programmers are enjoying a productivity boon with chatbots as their ‘copilots’, but I have yet to encounter one who has ceded their coding skill altogether. Copywriters may turbocharge their content with AI ‘assistants’ but still have to check the automated output is contextually appropriate.

The ironies of LLM-based automation

Where there is the promise of automation, there is a need for human oversight and intervention. This is among the ‘ironies of automation’ that cognitive psychologist Lisanne Bainbridge wrote of in her 1983 paper of the same name. Drawing on examples from process industries and flight-deck automation, Bainbridge observed that the contribution of human operators becomes more crucial as systems become more advanced, because operators are called on to intervene when these systems break down or behave in unexpected ways. Even if their contribution is sporadic and fleeting, human operators must never allow their know-how of manual processes to erode, since they may be called upon when automated systems fail.

The same may apply to today’s large language models, given their tendency to go off the rails. The extent of OpenAI’s ‘red-teaming’ efforts (inadequate as they surely are), and the push towards integrating chatbots with plugins that compensate for their lack of real-world grounding, is recognition enough of the blind spots these technologies possess. The role of humans - in engineering prompts, analysing outputs, debugging, stamping out strange and threatening behaviours - is unlikely to disappear any time soon.

This is no time for complacency, of course, and some degree of disruption to work is a given. I am absolutely convinced of the productivity gains afforded by large language models, which are bound to exert downward pressure on wages throughout the knowledge economy. ChatGPT may not displace an entire team of programmers or copywriters or medics, but it may encourage companies to reduce headcount as they expect more output per individual.

Even here, other ironies of automation may kick in. As individuals become more productive, they will find more work to do (what writer Oliver Burkeman terms the ‘efficiency trap’). Yet another irony is the tendency of disruptive technologies to usher in new types of work. The Goldman Sachs paper acknowledges the historical precedent for this, citing economist David Autor’s finding that around 60% of workers today occupy roles that did not exist in 1940, ‘implying that over 85% of employment growth over the last 80 years is explained by the technology-driven creation of new positions.’

The future of work is not set in stone. Our best safeguard against a jobless existence is to scrutinise AI tools beyond the headline-grabbing claims, to identify their pitfalls, and to vigorously assert our place in a collaborative dynamic with them. Their blind spots can be our strengths.