The first thing that hits you about ChatGPT is its unwavering confidence. The chatbot experiences no hint of writer’s block - feed it a prompt and it will duly respond in a matter of seconds. With the same loquaciousness, it will occasionally own up to (some of) its blind spots. For instance, if you ask ChatGPT who won the Football World Cup last month, it will respond by acknowledging it has only been trained on data up to 2021 (though, suspiciously, it does seem to know of Elon Musk’s recent takeover of Twitter).

Exceptional cases aside, ChatGPT exudes such authoritativeness that readers could be forgiven for thinking its responses are the epitome of wisdom. I put this to the test with a retrospective thought experiment. If ChatGPT had been available when I wrote my book Mathematical Intelligence, how might the content have changed?

Rewriting history

Like any non-fiction book, Mathematical Intelligence draws heavily on declarative knowledge (the kind that is easily verified, or falsified, through sound research), such as milestones in the evolution of AI. It felt appropriate to ask ChatGPT for its own take on AI history, not least because it is now a big part of it. ChatGPT complies with my request for a bulleted summary of up to eight key events:

This does read like a succinct account of AI’s Greatest Hits. Seven of the examples are indeed mentioned in the book (the fourth item, on neuromorphic chips, did not surface in my research). I am surprised by the omission of Alan Turing’s seminal 1950 paper ‘Computing Machinery and Intelligence’, which introduced the imitation game now known as the Turing Test. One item catches my attention most of all. AlphaGo’s victory, momentous as it was, deserves its place on the list. But ChatGPT is out by two years: that triumph actually came in 2016 (it also gets the year wrong for GPT-2, which was released in 2019).

When I push ChatGPT on the AlphaGo point, it plainly confesses to its error - a mark of humility, perhaps. Worryingly, when I (deliberately and incorrectly) suggest the year was 2015, ChatGPT is only too eager to agree. Not so much humble as impressionable, and obsequious to a fault.

There is undeniably a ‘truthiness’ to ChatGPT; its answers are abundant, its tone measured, and its default style arguably less ‘bursty’ than human-written prose. But lapses like the one above, and the ease with which ChatGPT acquiesces to my own false claims, suggest its worldview rests on shakier foundations than it lets on. This should not come as a surprise, as the output of large language models is determined by statistical patterns they have picked up in their training data. They will opt for what is likely or plausible, which is not necessarily what is factually correct. Even competent journalists are coming unstuck, with tech site CNET forced to correct basic mathematical errors in an AI-generated piece.

It is no surprise, then, that ChatGPT has been deemed forbidden fruit in many quarters. Q&A website Stack Overflow issued a ban on ChatGPT-generated responses in December after it was flooded with incorrect answers to technical questions. Educators are nervous, too: access to ChatGPT has been blocked in 1,851 public schools in New York City, on the grounds that it fosters plagiarism of the worst kind (is there a good kind?), with students copying and pasting falsehoods into their essays. If I had relied on ChatGPT’s output in my earlier example, I would rightly be rebuked for getting my facts muddled - a hit rate of 6 out of 8 doesn’t cut it for non-fiction writers.

How chatbots are getting their facts straight

What can large language models do to get their facts straight? Humans employ different frameworks for distinguishing truth from fiction. In mathematics, for instance, we apply the mechanism of deductive reasoning to move from one claim to the next, accounting for every assumption and leap of logic (a kind of thinking that ChatGPT so far struggles with).

Away from the abstractions of mathematics, real-world arguments rely on a different kind of knowledge-verification system: referencing. Make a claim, cite a source (a credible one, ideally). The reader wants to know where your ideas come from, as well as how you are using, modifying or extending them in your own bid for originality. In short, referencing gives us a sense of the writer’s knowledge landscape. ChatGPT’s ‘knowledge landscape’ appears to be a smooth, undifferentiated terrain, where even the most absurd claims are presented as plausible, even incontestable. This makes referencing more urgent: we need to understand what sources have informed its authoritative answers, and - by extension - what might have been omitted.

There is a growing appetite among purveyors of large language models to integrate referencing into their tools. Historically, search engines have directed us to relevant sources, while chatbots now produce a summary drawn from the multitude of sources they have been trained on. Conversational search promises the best of both. It is widely reported that Microsoft intends to combine ChatGPT with its Bing search engine, putting Google on high alert. Smaller players such as You.com and Perplexity are already showing how these two paradigms combine: they will not only direct your search terms to relevant websites, but also provide a longform answer, with references to boot.

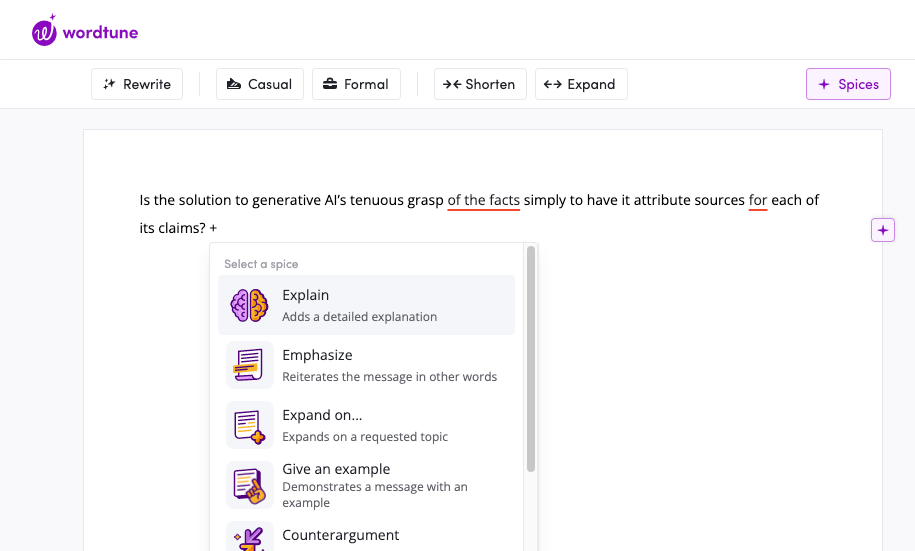

Is the solution to generative AI’s tenuous grasp of the facts, then, simply to have it provide a reference for every claim it makes? This is certainly the idea behind Wordtune Spices, which uses its own large language model to supercharge autocomplete. Wordtune gives the writer more agency to direct outputs: rather than serving up a fully-fledged response in one swoop, it generates text on a more granular basis, at the writer’s behest. Most importantly, for every morsel of information it generates, Wordtune offers up a source. AI21 Labs, the company behind the product, claims this feature solves the 'realistic looking but inaccurate information' problem of large language models.

There is much work to be done to fuse search and synthesis: when I asked You.com for its own take on the history of AI, every one of its points was taken from a single Forbes article - top marks for accuracy and transparency, but hardly displaying ‘evidence of wider reading’, that hallowed trait of top-tier students. This is bound to improve as approaches like retrieval-augmented generation take off, where chatbots ground their responses in a curated set of quality-assured documents.

Too much of something is no good thing

The efficiency gains of these technologies are obvious; at our fingertips we have the most prolific of writing assistants. As they get a better grip on the facts, we may not even have to work very hard to validate our sources, as ‘the AI’ will have taken care of that already. Writers who did not previously bother to identify or corroborate their sources (or resented having to do so) will feel vindicated as that aspect of writing becomes automated. And surely all of us, bar a handful of academics, will be grateful to bypass the tedium of formatting our citations.

But there is a risk that referencing alone is viewed as the solution to the opaque authoritativeness of large language models. Attributing sources is crucial to validating our claims and to acknowledging the originality of others; it is also the bare minimum that we expect of any credible non-fiction text. What remains open is how we position ourselves in relation to writing technologies. Just as overuse of calculators can dull our intuitive sense of numbers, an automated referencing tool may incline us towards accepting drip-fed sources as gospel. We should approach these texts equipped with a grasp of their subject matter, so that we can judge the credibility of their citations. We must come prepared to investigate those references further, so that this secondhand knowledge becomes 'our own'.

A writer’s primary weapon is their agency. A reader’s too, for that matter. The boon of automated attribution must be embraced with caution, and a willingness to aggressively reinsert ourselves into the writing process.